Greetings, fellow coders and tech enthusiasts. This is the 7th and final edition of the INT. Hackathon Diaries V1.0. But don't shed a tear just yet: we'll be back soon with the next edition, because our in-house masterminds are always coming up with new and innovative ideas.

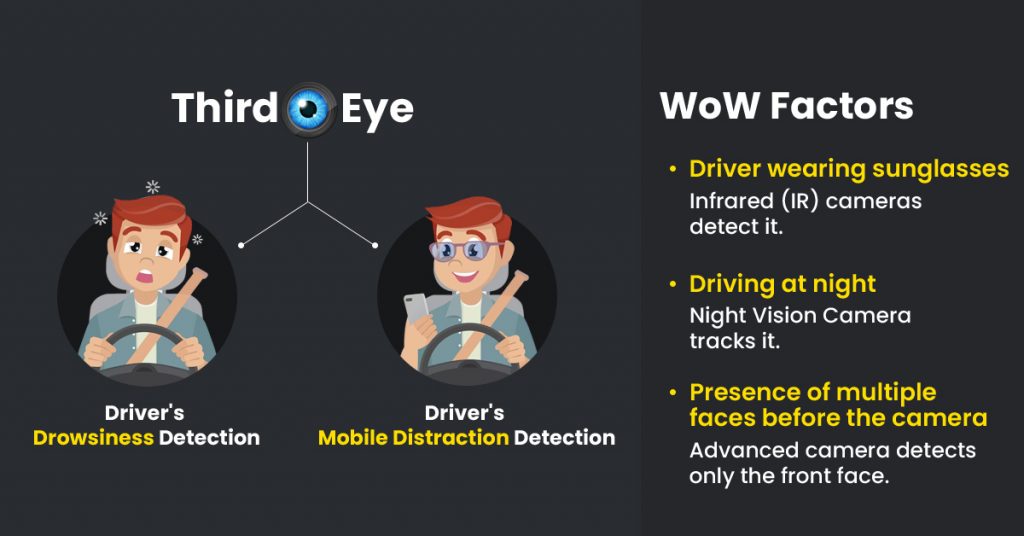

We've saved one of the best for last, and it's a project sure to keep you wide awake: The Third Eye – Driver's Drowsiness and Mobile Distraction Detection Solution.

We all know how dangerous driving is when we’re tired or distracted. It’s like playing Russian roulette with our lives, and those of everyone else on the road. But fear not, as The Third Eye team has come up with a solution that’s so clever, it’ll make you wonder why nobody thought of it before.

Using the latest computer vision and machine learning technology, The Third Eye system monitors drivers in real time, watching for telltale signs of drowsiness or distraction. It’s like having a personal wake-up call or a stern aunt, reminding you to keep your eyes on the road and your hands on the wheel.

Its goal is to pave the way toward a safer, more sustainable world on the road.

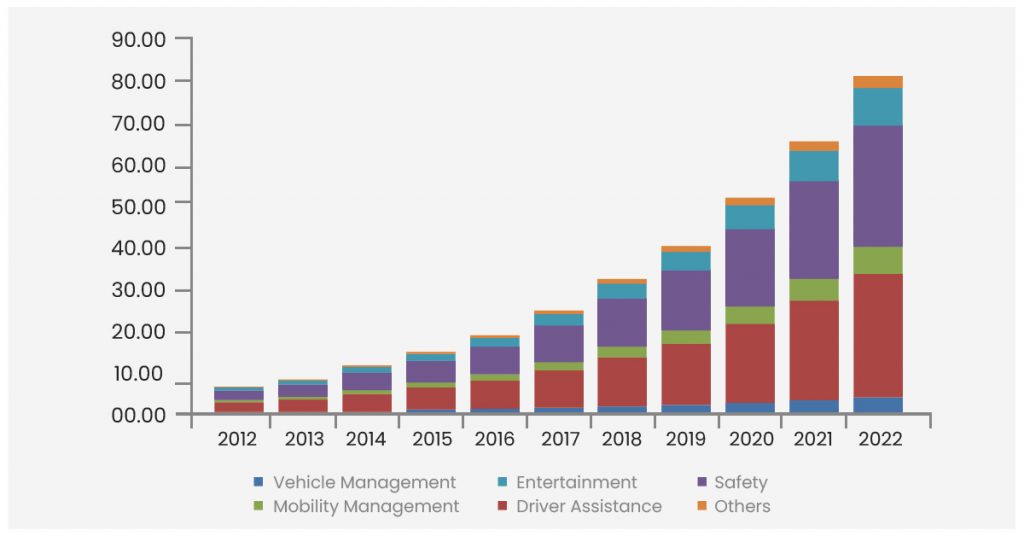

According to recent reports, the global market for drowsiness monitoring systems was valued at a staggering $2.2 billion in 2019 and is projected to reach $3.3 billion by 2027, growing at a compound annual growth rate (CAGR) of 5.2% over the forecast period.

But that’s not all, folks. The global market for distracted driving prevention technology is expected to explode from $1.27 billion in 2019 to a whopping $2.9 billion by 2025, with a CAGR of 12.7% during the forecast period.
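Projections like these can be sanity-checked with the standard CAGR formula, CAGR = (end / start)^(1 / years) - 1. A minimal Python sketch using the figures quoted above:

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate implied by two values `years` apart."""
    return (end_value / start_value) ** (1 / years) - 1


def project(start_value: float, rate: float, years: int) -> float:
    """Value reached by compounding `start_value` at `rate` per year."""
    return start_value * (1 + rate) ** years


# Drowsiness monitoring systems: $2.2B (2019) -> $3.3B (2027)
drowsiness_rate = cagr(2.2, 3.3, 2027 - 2019)
print(f"{drowsiness_rate:.1%}")  # 5.2%

# Distracted-driving prevention: $1.27B (2019) -> $2.9B (2025)
distraction_rate = cagr(1.27, 2.9, 2025 - 2019)
print(f"{distraction_rate:.1%}")

# Compounding $1.27B at the quoted 12.7% for six years
print(f"${project(1.27, 0.127, 6):.2f}B")
```

The first report's endpoints reproduce its 5.2% CAGR. For the second, compounding $1.27 billion at 12.7% for six years gives only about $2.6 billion, and the two endpoints imply a rate closer to 15%, so that report's 12.7% may be measured over a different base year or forecast window. It's worth checking the original source before quoting both numbers together.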

These numbers speak volumes about the urgent need for cutting-edge solutions that keep drivers alert and focused behind the wheel. So get ready to join the race to the top as we explore the latest developments in driver safety technology that are taking the market by storm.

The drowsiness detection pipeline works as follows:

Prepare the dataset: Collect and prepare data for drowsiness detection.

Augment the data: Improve the model's performance by applying data augmentation techniques.
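YOLOv5 already applies several augmentations on the fly during training, controlled by its hyperparameter file (for example `fliplr` for horizontal flips and `mosaic` for mosaic augmentation). When augmenting images yourself, the labels must be transformed along with the pixels. This illustrative sketch flips an image, represented as a nested list of pixel values for simplicity, and mirrors its YOLO-format boxes to match:

```python
from typing import List, Tuple

# YOLO-format label: (class_id, x_center, y_center, width, height), coordinates normalised to [0, 1]
Label = Tuple[int, float, float, float, float]


def hflip(image: List[List[int]], labels: List[Label]) -> Tuple[List[List[int]], List[Label]]:
    """Mirror an image left-to-right and update its bounding boxes to match."""
    flipped_image = [list(reversed(row)) for row in image]
    # Only the horizontal centre moves; box width and height are unchanged
    flipped_labels = [(c, 1.0 - x, y, w, h) for c, x, y, w, h in labels]
    return flipped_image, flipped_labels


image = [[1, 2, 3],
         [4, 5, 6]]
labels = [(0, 0.25, 0.5, 0.2, 0.4)]
img2, labels2 = hflip(image, labels)
print(img2)     # [[3, 2, 1], [6, 5, 4]]
print(labels2)  # [(0, 0.75, 0.5, 0.2, 0.4)]
```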

Split the dataset: Divide the prepared dataset into training and testing sets.
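A reproducible split can be done with a seeded shuffle. This sketch splits image paths 80/20; the `images/frame_XXXX.jpg` naming is hypothetical. YOLOv5 finds each image's label file by swapping the `images` directory for `labels` in its path, so splitting the image list is enough as long as the labels follow that layout:

```python
import random
from typing import List, Sequence, Tuple


def train_test_split(items: Sequence[str], test_fraction: float = 0.2,
                     seed: int = 42) -> Tuple[List[str], List[str]]:
    """Shuffle items reproducibly and split them into (train, test) lists."""
    if not 0.0 < test_fraction < 1.0:
        raise ValueError("test_fraction must be between 0 and 1")
    shuffled = list(items)
    random.Random(seed).shuffle(shuffled)  # local RNG: same seed -> same split
    n_test = max(1, round(len(shuffled) * test_fraction))
    return shuffled[n_test:], shuffled[:n_test]


images = [f"images/frame_{i:04d}.jpg" for i in range(100)]
train, test = train_test_split(images, test_fraction=0.2)
print(len(train), len(test))  # 80 20
```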

Configure the model: Customise the YOLOv5 model for drowsiness detection by modifying its configuration files to specify the hyperparameters, input image size, and number of classes.
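In YOLOv5, the number of classes and their names go into a small dataset YAML file, the input image size is passed at training time (`--img`), and hyperparameters live in a separate hyp YAML file. This sketch renders a dataset file; the two class names and the folder layout are hypothetical:

```python
from typing import List


def dataset_yaml(root: str, class_names: List[str]) -> str:
    """Render a YOLOv5 dataset config (paths, class count, class names) as YAML text."""
    names = ", ".join(f"'{name}'" for name in class_names)
    lines = [
        f"path: {root}",             # dataset root directory
        "train: images/train",       # training images, relative to `path`
        "val: images/val",           # held-out images YOLOv5 evaluates on
        f"nc: {len(class_names)}",   # number of classes
        f"names: [{names}]",         # class names, indexed by class id
    ]
    return "\n".join(lines) + "\n"


config = dataset_yaml("../datasets/drowsiness", ["awake", "drowsy"])
print(config)
# In practice: pathlib.Path("drowsiness.yaml").write_text(config)
```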

Train the model: Train the YOLOv5 model using the prepared training set and the configured model.
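With Ultralytics YOLOv5, training is typically launched through the repository's `train.py` script. The helper below only assembles the command line; `drowsiness.yaml`, the epoch count and the batch size are placeholder values, not the team's settings:

```python
import shlex
from typing import List


def yolov5_train_command(data_yaml: str, weights: str = "yolov5s.pt", img_size: int = 640,
                         batch_size: int = 16, epochs: int = 100, hyp: str = "") -> List[str]:
    """Assemble the argument list for YOLOv5's train.py (run from a clone of the repo)."""
    cmd = ["python", "train.py",
           "--data", data_yaml,
           "--weights", weights,          # start from pretrained weights
           "--img", str(img_size),        # input image size in pixels
           "--batch-size", str(batch_size),
           "--epochs", str(epochs)]
    if hyp:
        cmd += ["--hyp", hyp]             # optional custom hyperparameter file
    return cmd


cmd = yolov5_train_command("drowsiness.yaml")
print(shlex.join(cmd))
# subprocess.run(cmd, check=True) would launch the actual training run
```

Starting from pretrained `yolov5s.pt` weights (transfer learning) usually converges much faster than training from scratch on a small, task-specific dataset.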

Evaluation: Measure the model’s performance on the testing set using evaluation metrics such as precision, recall, and F1 score.
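Precision, recall and F1 follow directly from counts of true positives (TP), false positives (FP) and false negatives (FN). The counts below are made up for illustration; YOLOv5's own `val.py` reports precision and recall alongside mAP:

```python
from typing import Tuple


def precision_recall_f1(tp: int, fp: int, fn: int) -> Tuple[float, float, float]:
    """Compute precision, recall and F1, returning 0.0 where a ratio is undefined."""
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return precision, recall, f1


# Hypothetical counts from a test set
p, r, f1 = precision_recall_f1(tp=90, fp=10, fn=30)
print(f"{p:.2f} {r:.2f} {f1:.2f}")  # 0.90 0.75 0.82
```

For a safety feature, recall (how many genuinely drowsy moments are caught) is arguably the number to watch most closely.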

Fine-tune: Adjust the hyperparameters and retrain the model on the entire dataset, or a subset of it.

Deployment: Integrate the trained model into a mobile or web application for real-world drowsiness detection.
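Once deployed, a single "drowsy" frame shouldn't trigger an alarm, since an ordinary blink briefly looks the same. A common approach, sketched here as an assumption rather than the team's actual logic, is to alert only after several consecutive drowsy frames. The class name `drowsy`, the 0.5 confidence threshold and the 15-frame window (about half a second at 30 fps) are all hypothetical:

```python
from typing import Iterable, Tuple

Detection = Tuple[str, float]  # (class name, confidence) as produced by the detector


class DrowsinessMonitor:
    """Raise an alert only after `min_frames` consecutive frames classified as drowsy."""

    def __init__(self, min_frames: int = 15, conf_threshold: float = 0.5):
        self.min_frames = min_frames
        self.conf_threshold = conf_threshold
        self.streak = 0  # consecutive drowsy frames seen so far

    def update(self, detections: Iterable[Detection]) -> bool:
        drowsy = any(name == "drowsy" and conf >= self.conf_threshold
                     for name, conf in detections)
        self.streak = self.streak + 1 if drowsy else 0  # any alert frame resets the streak
        return self.streak >= self.min_frames


monitor = DrowsinessMonitor(min_frames=3)
frames = [[("drowsy", 0.8)], [("drowsy", 0.9)], [("awake", 0.7)],
          [("drowsy", 0.6)], [("drowsy", 0.7)], [("drowsy", 0.9)]]
alerts = [monitor.update(f) for f in frames]
print(alerts)  # [False, False, False, False, False, True]
```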

The mobile phone distraction detection pipeline follows a similar path:

Data collection: Gather a dataset of images showing drivers distracted by mobile phones.

Data preparation: Convert the annotations into the YOLO text format that YOLOv5 trains on; annotations exported in another format, such as COCO JSON, need to be converted first.
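COCO stores each box as `[x_min, y_min, width, height]` in pixels, whereas a YOLO label file holds one line per object, `class x_center y_center width height`, with coordinates normalised by the image size. A minimal conversion sketch:

```python
from typing import List, Tuple


def coco_to_yolo(bbox: List[float], img_w: int, img_h: int) -> Tuple[float, float, float, float]:
    """Convert a COCO box [x_min, y_min, width, height] in pixels to normalised YOLO (x_c, y_c, w, h)."""
    x_min, y_min, w, h = bbox
    return ((x_min + w / 2) / img_w, (y_min + h / 2) / img_h, w / img_w, h / img_h)


def yolo_label_line(class_id: int, bbox: List[float], img_w: int, img_h: int) -> str:
    """Format one object as a line of a YOLO .txt label file."""
    x_c, y_c, w, h = coco_to_yolo(bbox, img_w, img_h)
    return f"{class_id} {x_c:.6f} {y_c:.6f} {w:.6f} {h:.6f}"


print(yolo_label_line(0, [100, 50, 200, 100], 640, 480))
# 0 0.312500 0.208333 0.312500 0.208333
```

COCO category ids are often 1-based and not contiguous, so they usually need remapping to 0-based class indices before being written out.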

Model configuration: Set up the YOLOv5 model to recognize mobile phone distractions.

Model training: Train the YOLOv5 model on the training set using PyTorch, the deep learning framework YOLOv5 is built on.

Evaluation: Test the trained model on the test set to determine its accuracy and performance.
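To score detections on the test set, each predicted phone box is matched against a ground-truth box by intersection over union (IoU); a prediction typically counts as a true positive when its IoU is at least 0.5, the threshold behind the mAP@0.5 figure YOLOv5 reports. A minimal IoU function for boxes given as corner coordinates:

```python
from typing import Tuple

Box = Tuple[float, float, float, float]  # (x_min, y_min, x_max, y_max)


def iou(box_a: Box, box_b: Box) -> float:
    """Intersection over union of two axis-aligned boxes."""
    # Corners of the overlapping region (may be empty)
    inter_w = max(0.0, min(box_a[2], box_b[2]) - max(box_a[0], box_b[0]))
    inter_h = max(0.0, min(box_a[3], box_b[3]) - max(box_a[1], box_b[1]))
    inter = inter_w * inter_h
    area_a = (box_a[2] - box_a[0]) * (box_a[3] - box_a[1])
    area_b = (box_b[2] - box_b[0]) * (box_b[3] - box_b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


print(round(iou((0, 0, 10, 10), (5, 5, 15, 15)), 3))  # 0.143
```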

Deployment: Deploy the trained model onto our device.

Alert mode: Once our device detects that the driver is using a mobile phone while driving, it emits a continuous alert until the driver puts the phone down.
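This alert behaviour can be modelled as a small state machine. In this hypothetical sketch, `update()` receives whether a phone was detected in the current frame; the `clear_frames` grace period is an assumption added so that one missed detection doesn't silence the alert while the phone is still in hand:

```python
class PhoneAlert:
    """Keep alerting while a phone is detected; stop once it has been absent for `clear_frames` frames."""

    def __init__(self, clear_frames: int = 10):
        self.clear_frames = clear_frames
        self.absent = clear_frames  # start in the "no phone" state

    def update(self, phone_detected: bool) -> bool:
        # Count frames since the phone was last seen
        self.absent = 0 if phone_detected else self.absent + 1
        return self.absent < self.clear_frames  # True means the alert is sounding


alert = PhoneAlert(clear_frames=2)
states = [alert.update(seen) for seen in [False, True, True, False, False, False]]
print(states)  # [False, True, True, True, False, False]
```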

The system is also designed to handle a few challenging real-world scenarios:

Scene 1: Driver wearing sunglasses

Infrared (IR) cameras, paired with IR illumination, can still capture the driver's eyes when they are partially obscured by sunglasses, since many sunglass lenses that block visible light let near-infrared light through.

Scene 2: Driving at night

A night-vision camera tracks the driver's face and eye movements in low light.

Scene 3: Presence of multiple faces before the camera

The system detects only the face directly in front of the camera (the driver's) and ignores any others in view.
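The post doesn't say exactly how the front face is singled out. One simple heuristic, shown here purely as an assumption, is to keep the largest detected face box, since the driver usually sits closest to the camera:

```python
from typing import List, Optional, Tuple

Box = Tuple[float, float, float, float]  # (x_min, y_min, x_max, y_max) in pixels


def select_driver_face(faces: List[Box]) -> Optional[Box]:
    """Pick the largest face box, assuming the driver is the face nearest the camera."""
    if not faces:
        return None
    return max(faces, key=lambda b: (b[2] - b[0]) * (b[3] - b[1]))


faces = [(50, 40, 90, 90), (200, 60, 420, 330), (500, 80, 540, 130)]
print(select_driver_face(faces))  # (200, 60, 420, 330)
```

Other reasonable choices would be the face closest to the image centre, or the one inside a fixed region where the driver's seat appears.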

Stay tuned and keep your eyes peeled for the next edition, where we’ll bring you more cutting-edge solutions and innovations that are driving the industry forward. See you there.